The file refers to the research paper titled "Transformer-based performance prediction and proactive resource allocation for cloud-native microservices," published in Cluster Computing in August 2025.

TPRAM uses a Transformer-based attention mechanism to build a performance prediction model for microservice nodes on a system's "critical path".

Experimental results using the DeathStarBench benchmark showed that TPRAM can save at least 40.58% of CPU and 15.84% of memory resources while maintaining end-to-end Quality of Service (QoS).

Updated July 12th

HARDCORE Mode

> No Premium Shop

> Pure Skills + Collaboration

> Chaotic, Unbalanced, Untested

> Start From Level 1

> Only 1 Character Per IP/Player

> Server Reset = Progress Lost

> Extreme Gold, Luck, XP

> Warped Drop Rates

> Buy, Sell, Upgrade, Exchange Anywhere!

> PVE Death: Lose 1 level

> PVP Death: Lose 3 levels to the opponent

> PVP Death: Drop a slot item with ~10% chance

> PVP Death: Drop an inventory item with ~20% chance

> PVP Death: Lose an inventory item with ~5% chance

> PVP Death: Lose 80% of your gold

> Priest Heals: Nerfed to 60%

> Trade: Receive 480K Gold Every 64 Seconds in the Beach Office

> Beach Office: Transport to anywhere

> You have to be within 10 levels to engage in PVP

> Tavern: Glitched
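A few of the numeric rules above can be written down as small helpers. This is only a sketch under assumptions: the function names are hypothetical, "within 10 levels" is read as a difference of at most 10, gold loss is rounded down, and levels are assumed not to drop below 1. None of this is the game's actual server code.

```javascript
// Hypothetical helpers that restate the Hardcore rules; not game code.
const PVP_LEVEL_RANGE = 10;        // "within 10 levels to engage in PVP"
const PVP_GOLD_LOSS_PERCENT = 80;  // "PVP Death: Lose 80% of your gold"

// Assumption: a level difference of exactly 10 still counts as "within".
function canEngagePvp(levelA, levelB) {
  return Math.abs(levelA - levelB) <= PVP_LEVEL_RANGE;
}

// Assumption: the lost amount is rounded down to whole gold.
function goldAfterPvpDeath(gold) {
  const lost = Math.floor((gold * PVP_GOLD_LOSS_PERCENT) / 100);
  return gold - lost;
}

// PVE death costs 1 level, PVP death costs 3.
// Assumption: a character never drops below level 1.
function levelAfterDeath(level, kind) {
  const loss = kind === "pvp" ? 3 : 1;
  return Math.max(1, level - loss);
}
```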

Take Screenshots

> Hardcore Mode is experimental: there is no long-term progress saving and there are no leaderboards yet, so if you want to save your progress or show off your achievements, do take screenshots!

Provide Feedback

> Please send an email to [email protected] about your experience in this new mode. I would like to hear about how you view Adventure Land, and whether this new Hardcore mode improves the game for you. When completed, the Hardcore mode will likely be a weekend-only thing: it will start on Friday and end on Sunday. I think there might be a lot of players who find loopholes etc. I've certainly improved some routines to prevent some scenarios. Depending on the feedback, I might, for example, add an NPC that tells where a certain player is for 10,000,000 gold, or add 1-2 hours of peace time every 3-4 hours.

Boosters

Activation and Usage

> After you press the "ACTIVATE" button, the booster item lasts 30 days. The booster works from a character's inventory; it is not equipped or consumed. The effect can be observed in the "STATS" interface. The item can be transferred between characters or sold through merchanting.

Optimal Strategy

> Don't loot chests, keep the Booster in XP mode.

> While battling a boss, shift the Booster to Luck mode.

> When there are enough chests around, shift the Booster to Gold mode and loot.

> This way, you can benefit from all 3 bonuses with 1 stone.

> You can combine boosters as you combine accessories. The combination always succeeds; however, if you use a "Primordial Essence" for the combination, it triggers a proc-chance routine.

> You start with a 12% chance. Each time you succeed, your booster becomes a higher-level booster and the routine internally repeats with half the chance. So you can even receive a +5 booster instead of a +1!
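To get a feel for how rare the higher results are, the halving routine can be worked through numerically. The sketch below rests on assumptions: a plain combination yields a +1, each successful proc adds one level, the chance halves after every success (12%, 6%, 3%, 1.5%), and the routine stops at +5. The function name and the cap are illustrative, not taken from the game.

```javascript
// Probability of ending at each booster level under the assumed routine.
// Index 0 is the plain +1 result, index 4 is the +5 result.
function boosterUpgradeDistribution(startChance = 0.12, maxExtraLevels = 4) {
  const distribution = [];
  let reach = 1;            // probability of having reached the current level
  let chance = startChance; // chance that the next proc succeeds
  for (let extra = 0; extra < maxExtraLevels; extra++) {
    distribution.push(reach * (1 - chance)); // the next proc fails, stop here
    reach *= chance;                          // the next proc succeeds
    chance /= 2;                              // "repeats with half the chance"
  }
  distribution.push(reach); // assumed cap reached, no further procs
  return distribution;
}

boosterUpgradeDistribution().forEach((p, i) => {
  console.log(`+${i + 1}: ${(p * 100).toFixed(4)}%`);
});
```

Under these assumptions, roughly 88% of essence combinations stay at +1, about 11.3% end at +2, and about 0.7% end at +3; anything higher is far rarer.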

CODE

> You can use the activate and shift functions in CODE.

shift(0,'xpbooster')

shift(0,'luckbooster')

shift(0,'goldbooster')

> The calls above can be used to shift a booster in the 0th inventory slot.

> Implementing the optimal strategy is left as an exercise to the reader.
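One possible starting point for that exercise is a small pure function that picks the booster mode, which you would then pass to `shift`. It is only a sketch: the function name, the `chestThreshold` default of 10, and the choice to give a boss fight priority over looting are assumptions, and how you detect a boss or count chests is left to your own Code.

```javascript
// Hypothetical decision helper for the strategy described above.
// Returns one of the three booster names accepted by shift().
function pickBoosterMode({ fightingBoss, chestCount, chestThreshold = 10 }) {
  // Assumption: luck matters most while a boss is being fought.
  if (fightingBoss) return "luckbooster";
  // Enough chests are waiting: switch to gold before looting them.
  if (chestCount >= chestThreshold) return "goldbooster";
  // Default: keep farming XP and leave the chests alone.
  return "xpbooster";
}

// In-game usage, with the booster in inventory slot 0:
//   shift(0, pickBoosterMode({ fightingBoss: false, chestCount: 12 }));
```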

SHELLS

Where to Find

> You can get shells by farming green Goos, from exchangeable items as rare rewards, and through various other hidden and non-hidden ways in-game.

Original Plan

> Adventure Land aims to cover operating costs and generate long-term revenue by selling SHELLS as a premium currency. It will be for cosmetic items, possibly extra bank storage and some rare account operations, like character transfers.

Keeping the game non-p2w is a top priority, so SHELLS won't affect in-game performance.

After Steam Early Access

> Adventure Land performs really well on Steam so far. As an indie developer, one of my biggest concerns was being cash positive. If it continues like this, and I hope it does, it might even be possible to introduce cosmetics through in-game achievements and gold only.

Skillbar and Keymap

Skillbar and Configuration

> Skillbar is the small vertical bar you see on the right side of the screen, above the game logs. You can configure your skillbar through Code. Changes you make are saved and persisted for your Character, on your system locally.

set_skillbar("1","2","3","4","5","X","Y");

set_skillbar(["1","2","3","4","5","X","Y"]);

Keymap and Configuration

> Keymap is for mapping keypresses to skills, abilities and actions. Similar to the skillbar, you can change your keymap from Code, and the changes are persisted locally.

map_key("1","use_hp");

map_key("2","snippet","say('Woohoo')");

map_key("X","supershot");

unmap_key("X");

reset_mappings(); // restores the default mappings

show_json(G.skills); // shows the skill definitions

Mappable Keys

Snippets

> Snippets are small code pieces that are either evaluated inside your own Code, or on a blank runner if your Code isn't running.

map_key("Q","snippet","smart_move('winterland')");

Mapping Items

> Items can be mapped manually to keys, or by dragging and dropping an item to a key slot. Pressing that key activates, uses, or equips the item.

map_key("SPACE",{"name":"stand0","type":"item"});

Map New Keys

> You can map to unmapped keys by including the `keycode` argument in your mappings. You can learn keycodes from keycode.info

map_key("DOT",{"name":"pure_eval","code":"ping()",keycode:190});

Advanced Usages

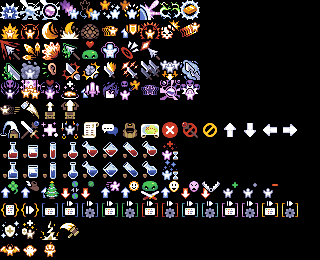

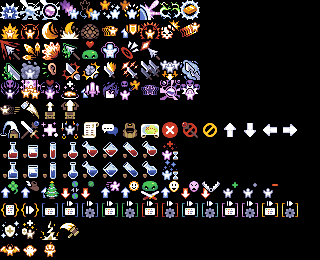

> You can override the game's default key mappings and add new functionality to keypresses using the "pure_eval" skill. Unlike "snippet", "pure_eval" runs JavaScript code inside the game window, so be careful when using it. You can change any rendered icon to one of your choosing. P.S. There's a list of icons in G.skills.snippet.skins

//Example code that overrides ESC

Terms of Service

Privacy Policy

Thanks and Attributions

First of all, I want to thank all the players who played the game, provided feedback, endured, shared the joyous moments, kept pushing the game in the right direction.

I want to thank /r/mmorpg for being an open platform for mmorpg enthusiasts, I posted on /r/mmorpg at the end of 2016, and I've been improving and shaping the game with the small early adopter userbase ever since.

I want to thank Mark Jayson Lacandula, aka Json. I started working on the game in June 2016 and launched very early, around August 2016. He was one of the very first players who tried the game after seeing my tiny AdSense ad. I didn't take him too seriously when he wanted to try my in-house map editor, but shared it with him anyway. After a very short time, he shared the first map he made, and at that moment I knew I had discovered a rare natural talent. He made all our maps, learned pixel art, extended our tilesets and sprites, and kept exploring. He was and is always there, through the good times and the bad times. Game development is no easy task. Contrary to popular belief, it's not rewarding and you rarely reap the benefits. Up to this point, we didn't reap, but he never quit, so thank you Json. I hope that after Adventure Land, you keep on working in the game industry. (Reader: If you are a talent hunter, do reach out to him.)

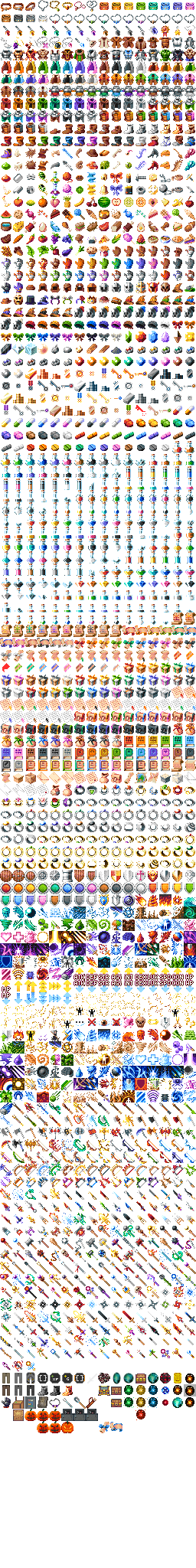

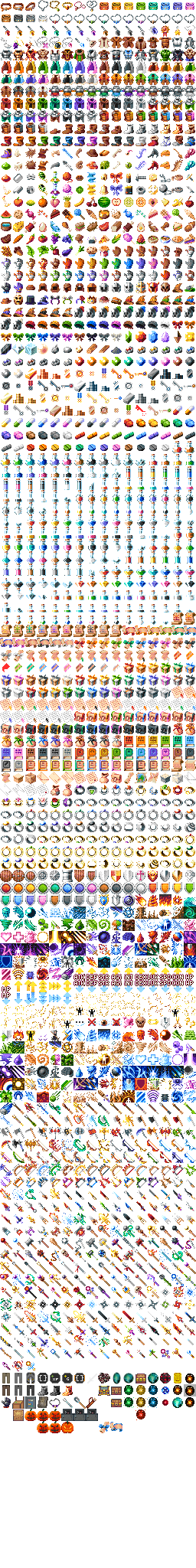

I want to thank Ellian, our freelance pixel artist, for being the most professional freelancer I've ever worked with. No one is more reliable than you. Adventure Land started with an off-the-shelf 16x16 icon pack. With Ellian, we created a custom 20x20 iconset: with the community we dreamed, and Ellian made. Workload-wise, Adventure Land is a small fish, but through 2 years he was always there at a moment's notice to extend our iconset 5-6 items at a time :) If I could do it all over again, maybe for Adventure Land 2, I'd love to create all the tilesets and sprites from scratch with Ellian.

I want to thank all the developers, artists, composers, whose libraries, assets and work I used in Adventure Land.

And thank you for reading, and hopefully for playing Adventure Land :)

[10/12/18]

I want to also thank Steam for being such an awesome platform, 1+ years now, it has been driving new players in, no advertisements or marketing.

Another thanks to Google Cloud for providing us startup credits, it really eased my financial burdens during this development stage.

[06/03/20]

There's one non-breakable main rule, you can't upset, bully, or sadden other players - Adventure Land thrives on being a positive game with a positive community. When you treat other players with love and respect, they'll treat you that way too!

The secondary rule is to not abuse the game. You can do pretty much everything with Code and the game, and we are even exploring allowing non-UI botting, but please don't DDoS the game or intentionally abuse bugs you find. We have lucrative bug bounties

Third rule is to respect the character limits. Maintaining the game is costly beyond imagination, almost infeasible. If everyone respects the limits, we won't need to add irritating captchas etc. to keep playing. While you might easily use a VPN or server to play with additional characters, please don't (it has been done in the past; at 2+ accounts it usually gets noticed fast, and you'll only force me to divert my time and energy into battling multiple accounts)

Selling items, gold etc. with real money is forbidden, don't waste your money, but maybe buy 1-2 cosmetic items to support the game :)

Offensive or insensitive nicknames are forbidden

Breaking the rules usually results in temporary bans. Don't be afraid to try new things; as long as you mean well, there won't be any consequences

Have fun

This privacy policy explains how your data is used and stored by Adventure Land

Adventure Land uses Cookies for authentication and settings, cookies are stored between HTTP and HTTPS

Due to popular demand and technical challenges, the game can be played over both HTTP and HTTPS. Over HTTP, if you are on a compromised network, your data will be exposed. If you are playing the game from Steam or the Mac App Store, the game uses HTTPS by default

Adventure Land uses Google Analytics for statistics

Adventure Land uses Cloudflare for security and filtering. Almost all HTTP/S data goes through Cloudflare, so they can potentially access all the data you provide

Adventure Land uses your location data to determine which game server is broadly closer to you

Adventure Land stores your encrypted password, characters, character names, data you provide, your IP (used extensively for the character limits logic), and character actions. There are various logs and backups of this data, and there's currently no system to delete them. As cloud storage is extremely expensive, I personally want to start deleting them in the future, but couldn't find the time yet

Adventure Land doesn't have a reliable way to send emails, so it's a player's responsibility to keep themselves up-to-date on this privacy policy, but the main theme will never change: Adventure Land is a game with no intention of misusing a player's data

I'm an indie developer doing my best to protect my players and meet their needs, but we live in a chaotic world. If you are a regular player reading this, please be careful. While I do everything to protect you and your data (especially after everything I've experienced since I launched the game, which made me grow as a developer and a human, at least I hope), nothing is safe on the Internet, so always approach things with this fact in mind

For any questions: [email protected]

Last Edit [04/12/18]


$10 for 800 SHELLS

$25 [+8%] for 2,160 SHELLS

$100 [+16%] for 9,280 SHELLS

$500 [+24%] for 49,600 SHELLS
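The bracketed percentages in the price list are consistent with a base rate of 80 SHELLS per dollar (from the $10 tier) plus the listed bonus. A small sketch of that arithmetic follows; the constant and function names are illustrative, and the base rate is inferred from the list rather than stated anywhere.

```javascript
// Base rate inferred from "$10 for 800 SHELLS"; an assumption, not a documented value.
const BASE_SHELLS_PER_DOLLAR = 80;

// Uses integer arithmetic for the percentage to avoid floating-point drift.
function shellsFor(dollars, bonusPercent = 0) {
  return (dollars * BASE_SHELLS_PER_DOLLAR * (100 + bonusPercent)) / 100;
}

// The four listed tiers as [dollars, bonus percent] pairs.
const tiers = [[10, 0], [25, 8], [100, 16], [500, 24]];
for (const [dollars, bonus] of tiers) {
  console.log(`$${dollars}: ${shellsFor(dollars, bonus)} SHELLS`);
}
```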

About SHELLS

Adventure Land uses Stripe, the leader in payments processing, to handle payments. Your credit card information never touches Adventure Land's servers.

SHELLS are Adventure Land's rare, purchasable currency. Unlike many other games, you can find SHELLS in-game too. They drop from gems and monsters. They are rare.

This creates equal opportunities for all players. You can only purchase non-essentials with SHELLS, like cosmetics and extra bank storage, to ensure the game is not pay-to-win.

You can support Adventure Land's development by buying SHELLS and hopefully enjoy the game more, faster, to your heart's desire!

Alternative Payments

Offers

Adventure Land uses Paymentwall for alternative payments and offers. There are various localised payment methods, and offers to receive free SHELLS.